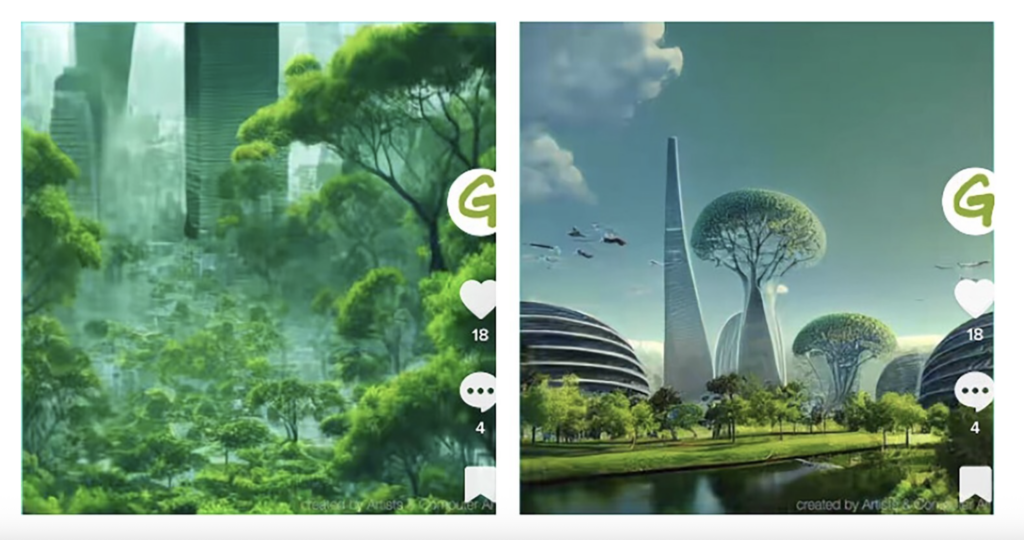

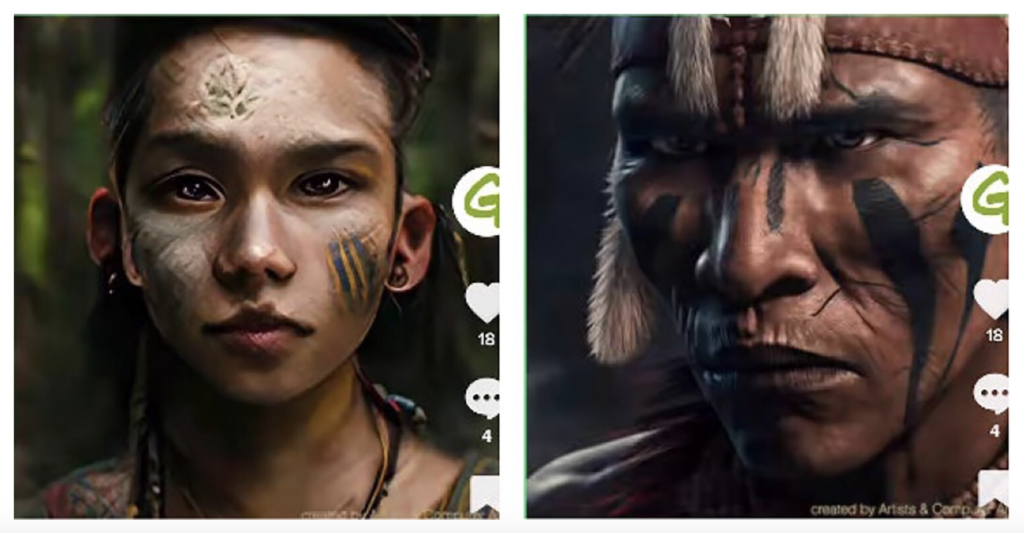

Image, above: AI-generated imagery showing humanoid and Afrofuturist representations of people of the future. Images originally created by Greenpeace and republished in an Open Access article by Ella Muncie in the journal Environmental Communication. This screenshot of the Greenpeace images from Figure 2 of Muncie’s paper is distributed under the terms of the Creative Commons Attribution 4.0 International License.

Artificial intelligence for images is changing visual storytelling in ways we have never seen before. You can imagine something in your mind, type a few prompts, and within seconds, your vision becomes AI-generated art. A living city, a glass forest, a pixelated version of the world. It feels like magic. Well, maybe not. Because behind every beautiful image lies a very concerning question: whose imagination created this?

To create images, AI systems often use diffusion models, which transform text prompts into visuals. These models are trained on massive datasets of images, captions, and related metadata. Once the model learns the relationships between text and visual features, it can generate new images in response to a text prompt.

As the accuracy of AI-generated images improves, their potential usefulness in fields such as science communication and advocacy continues to grow. However, there are also limitations, since AI-generated content relies on pre-existing image datasets that often reinforce and amplify white colonial perspectives.

A 2025 article published in Environmental Communication explored this tension by examining Greenpeace’s 2020 “Alternative Futures” initiative, one of the first environmental campaigns to use artificial intelligence for creative activism. In an interview, the study’s author, Ella Muncie, explained that the study stemmed from her PhD research on AI in environmental communication. She described stumbling upon Greenpeace’s use of AI and being intrigued by “how different and new” it was for advocacy work. Since then, she has continued this line of inquiry through a postdoctoral fellowship exploring how organizations like Friends of the Earth and the Wildlife Trusts are adopting or resisting AI in their campaigns.

The Experiment

Greenpeace International’s Alternative Futures campaign invited young people around the world to imagine what a just and sustainable future could look like. One of its flagship events, called #DareToDream, took place in Jakarta, where participants were asked to describe, in 12 words, the future they wanted to live in. Those words were entered into Midjourney, an AI art generator, which produced digital images projected across public screens and shared online as a gallery of collective hopes and fears about climate change.

To explore how meaning and representation emerged from this process, Muncie conducted an online ethnography. This qualitative research method examines how people behave, communicate, and construct meaning in digital environments such as social media platforms, online communities, and virtual spaces. She gained access to more than 500 campaign documents, including design briefs, planning files, and social media posts. Muncie also analyzed hundreds of AI-generated images and TikTok videos created during the Jakarta event to examine how color, tone, and visual rhythm shaped the meanings of the AI images.

According to Muncie, the reaction of participants at the Jakarta event reflected the appeal of the technology rather than its cultural accuracy. She said the system relied on training data dominated by visual references from the West, which led many outputs to resemble generic metropolitan or sci-fi scenes rather than a recognizably Indonesian environment.

“Most spoke very positively; they thought AI was capturing exactly what they imagined,” Muncie said. “But in reality, many images did not reflect Indonesia at all. They looked generic, more like Western skylines or fantasy cities. Even when the prompts were culturally specific, the algorithm pulled from a limited set of visual references. The excitement came from novelty rather than authenticity.”

What They Found

While the campaign aimed to highlight diversity and inclusion, many of the AI-generated images leaned on familiar global media patterns. Indigenous people appeared in romanticized scenes. Natural environments were presented as untouched wilderness. Urban spaces looked futuristic and oddly empty. In short, the technology created beautiful images that did not reflect anything real. The study found that Greenpeace’s AI imagery produced visuals that looked hopeful but felt detached from cultural reality.

Even when the campaign aimed to showcase communities of color or marginalized voices, the algorithm’s training data favored Western aesthetics, leading to unrealistically polished images. This imbalance has direct consequences for who has access to environmental communication, who is represented, and whose realities feel possible within AI-generated activism, Muncie explained.

“The data that trains AI systems often comes from sources embedded with certain values and priorities rooted in a white, male worldview. That means marginalized voices are often excluded, which is a major issue in environmental communication,” she said.

Muncie also mentioned a practical risk that environmental organizations must prepare for. As generative systems improve at producing photorealistic scenes, the line between real and synthetic imagery becomes harder to see. That opens the door for mistakes that could harm credibility.

“As AI becomes more advanced, it learns what makes an image look real,” she said. “Organizations could easily repost an AI-generated photo, thinking it is authentic, only to find out later that it is fake. For environmental organizations, that could seriously undermine public trust.”

The study also highlighted the moral and ecological burdens these tools entail. Large generative models require significant computational resources, which ties them to energy consumption and water use, as well as the mineral extraction needed to build the technology itself. These impacts often fall on the same regions that environmental justice movements try to protect. Muncie said this creates a tension that environmental groups must acknowledge.

“AI systems consume huge amounts of water and energy, which is ironic for organizations advocating sustainability,” Muncie said. “If you are campaigning for decarbonization but using a carbon-intensive technology, your values are not aligning with your message.”

In this sense, the campaign’s use of AI to promote sustainability revealed the irony of relying on a resource-intensive technology to advocate for ecological balance.

What It Means for Environmental Communicators

This study challenges the growing enthusiasm for AI as a storytelling tool. For environmental organizations, technology offers new ways to visualize ideas and reach global audiences, but it also brings new responsibilities. The findings suggest that while AI can amplify imagination, it can also flatten meaning. As Muncie put it, “AI can make campaigns faster and more efficient, but efficiency should not replace authenticity.” She believes AI works best as a creative assistant rather than a creator, helping with textual or campaign-efficiency tasks but not replacing human perspective.

For communicators, the lesson is not to abandon technology but to use it critically. Muncie emphasized the importance of understanding how algorithms learn, the data they draw from, and the cultural assumptions they encode. Without that awareness, campaigns risk reproducing inequality even as they claim to resist it. Greenpeace’s experiment represents both the promise and the peril of the digital turn in activism. The visuals captured attention and sparked discussion, but they also exposed how easily technological tools can mirror the very hierarchies they aim to dismantle. Muncie argued that future advocacy should bring diverse voices into the development of AI itself, allowing communities to shape how their realities and languages are represented.

“Responsible use starts with involving diverse voices in the development and use of AI,” Muncie said. “It cannot just be built around Western ways of thinking. We need to bring in different languages, knowledge systems, and cultural understandings so that people have real agency in how the technology represents them.”

Bottom Line

Artificial intelligence has expanded the boundaries of creativity, but it has also blurred the line between innovation and imitation. The Greenpeace study shows that machines can help us imagine the future, but not necessarily understand it. The images they create may dazzle the eye, yet they often miss the cultural depth that gives human storytelling its power.

For environmental communicators, AI can assist, but it cannot replace human experience, which is built on authentic voices, real communities, and real imagination.

Read the full study:

Muncie, E. (2025). Artificial Intelligence and New Voices in Environmental Campaigning. Environmental Communication, 1–20. https://doi.org/10.1080/17524032.2025.2554988

Abdulmalik Adetola is a master’s student in the Media Innovation and Journalism program at UNR and a graduate assistant for the Hitchcock Project. He is a passionate advocate for health literacy and digital communication, dedicated to promoting accurate and accessible health information in the modern age.